@carljharris3141,

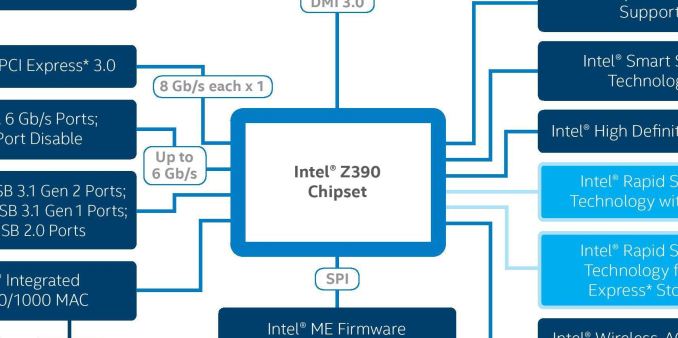

You need to bear in mind the 20 PCIe lane limit on all Intel desktop-class CPUs.

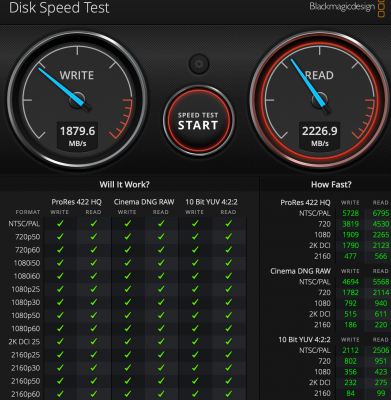

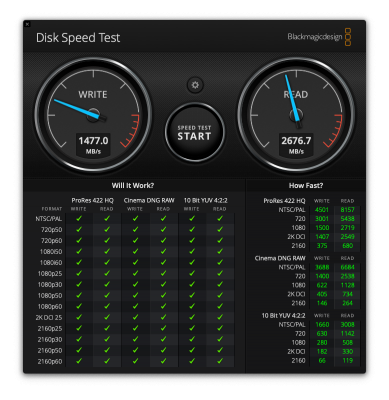

If both M.2 ports are serviced by the CPU (i.e. directly connected to the CPU), then installing another NVMe drive will switch your GPU to x8 lanes rather than x16, which will reduce its performance by 10-15%.
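To put numbers on that, here is a rough sketch of the lane arithmetic. It assumes the 20-lane budget mentioned above, x4 per CPU-attached NVMe drive, and a GPU slot that can only run at x16 or x8. The function name and defaults are made up for illustration, and real boards follow their own bifurcation rules, so treat it as a back-of-the-envelope model only.

```python
def gpu_link_width(cpu_lanes=20, cpu_nvme_drives=1, lanes_per_nvme=4):
    """Return the widest GPU link (16 or 8) that fits the CPU lane budget.

    Simplified model (an assumption, not a board spec): every CPU-attached
    NVMe drive takes lanes_per_nvme lanes, and the GPU slot can only run
    at x16 or x8.
    """
    remaining = cpu_lanes - cpu_nvme_drives * lanes_per_nvme
    for width in (16, 8):
        if remaining >= width:
            return width
    raise ValueError("not enough CPU lanes left for the GPU in this model")


print(gpu_link_width(cpu_nvme_drives=1))  # one CPU-attached NVMe drive
print(gpu_link_width(cpu_nvme_drives=2))  # two CPU-attached NVMe drives
```

With one drive, 16 lanes remain and the GPU stays at x16. With two drives only 12 remain, so in this model the GPU falls back to x8.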

If the first M.2 port is serviced by the CPU and the second one is serviced by the PCH, then the second M.2 will be sharing bandwidth with all the devices routed through the PCH (Ethernet, audio, all USB ports, all SATA ports, etc.), which will severely limit its speed compared to the M.2 port serviced by the CPU.

In that instance, if you created a RAID 0 of both NVMe drives, the M.2 serviced by the CPU will be slowed down by the M.2 serviced by the PCH when the system is busy.
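A simple way to see why: in a striped array each request is split across the members, so it only completes when the slowest member is done. The sketch below uses that simplified model (member count times the slowest member's speed). The function name and the MB/s figures are hypothetical, not measurements from any real drive or board.

```python
def raid0_throughput(member_speeds_mb_s):
    """Estimate RAID 0 sequential throughput under a simple striping model.

    Each stripe is split evenly across the members, so a request finishes
    only when the slowest member finishes:
    total ~= number of members * slowest member speed.
    """
    if not member_speeds_mb_s:
        raise ValueError("need at least one member drive")
    return len(member_speeds_mb_s) * min(member_speeds_mb_s)


# Hypothetical figures for illustration only.
both_on_cpu = raid0_throughput([3000, 3000])
pch_drive_contended = raid0_throughput([3000, 1200])
print(both_on_cpu, pch_drive_contended)
```

With those made-up numbers, the array with a contended PCH drive comes out slower than a single CPU-attached drive on its own, which is the situation described above.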

The only time using RAID with NVMe SSDs makes sense, IMO, is on X299 or Xeon systems, as those CPUs have many more PCIe lanes available that are serviced by the CPU, so you will get the full benefit.

Check your motherboard's manual. There should be a block diagram which shows you how the PCIe lanes are distributed, which M.2 ports are directly connected to the CPU, and which are routed through the PCH.
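The block diagram is the authority on routing, but if you happen to be running Linux (an assumption on my part), you can at least confirm what link width each device actually negotiated. This is a minimal sketch that reads the `current_link_width` and `max_link_width` attributes from sysfs. The helper name is made up, and it will not tell you whether a port hangs off the CPU or the PCH, only whether a device is running narrower than it could.

```python
from pathlib import Path


def read_link_info(sysfs_root="/sys/bus/pci/devices"):
    """Return {pci_address: (current_width, max_width)} for PCI devices.

    Devices that do not expose link attributes are skipped. Returns an
    empty dict if the sysfs directory does not exist (e.g. not on Linux).
    """
    info = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return info
    for dev in sorted(root.iterdir()):
        try:
            current = (dev / "current_link_width").read_text().strip()
            maximum = (dev / "max_link_width").read_text().strip()
        except OSError:
            continue  # attribute missing or unreadable for this device
        info[dev.name] = (current, maximum)
    return info


for address, (current, maximum) in read_link_info().items():
    print(f"{address}: x{current} (max x{maximum})")
```

A GPU showing x8 against a max of x16, or an NVMe drive showing x2 against a max of x4, would be the lane sharing described in this post showing up in practice.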

The short version is that if you want to get the real benefit of using two M.2 NVMe SSDs in RAID 0, then both M.2 ports should be serviced directly by the CPU, but it will come at the cost of reducing your GPU to x8 PCIe lanes.

If you have any other PCIe cards in the system, then one or possibly both M.2 ports will be reduced to x2 PCIe lanes, halving the performance of the NVMe drive(s).

Cheers

Jay