- Joined

- May 31, 2015

- Messages

- 98

- Motherboard

- MSI GE70 2OE

- CPU

- Intel Haswell Core i5 4200M 2.5GHz

- Graphics

- Intel HD 4600 & NVIDIA GTX 765M

Hey guys!

Recently I updated from my perfect Sierra hackintosh laptop directly to Mojave, skipping High Sierra, and encountered a nasty color depth glitch. Since Yosemite I've been using ig-platform-id = 0x0a260006 for my Haswell Intel HD4600 Mobile. But in Mojave, despite of full QE/CI, I noticed that all gradients and shadows were garbled and not smooth, like I was playing an old 16-bit color video game. For me it was not just "cosmetic" bug which anyone else could not even notice or call it "not a big deal", it was vital for me to see the full color palette as I work with graphics, besides my eyes were getting tired fast. Background here:

https://www.tonymacx86.com/threads/...s-fixes-on-mojave.255823/page-14#post-1823669 (Post #139)

I tried to change different ig-platform-ids, tried to migrate form FakePCIID* kexts to Lilu+Whatevergreen bundle with injecting properties instead of kext patches, tried to inject ProductID=0x9C7C in Clover, patch DSDT with custom EDID generated with tosbaha's script, almost got lost in the forest of hotpatching backlight control... But all this was senseless, as the glitch was still there whatever I did.

I noticed that users with Haswell graphics were experiencing the same problems since High Sierra:

https://www.tonymacx86.com/threads/intel-hd4400-display-issues-in-high-sierra.233119/

https://www.tonymacx86.com/threads/24-bit-screen-color-depth.243582/

https://www.tonymacx86.com/threads/intel-hd4000-only-24bit-color-depth.251813/

I was even ready to roll back to Sierra as one of the guys in these threads did, but than I decided to do some more experiments with EDID, as I had a feeling that I still need to dig in that direction. And I encountered a thread on another forum, where user black.dragon74 described a method of preparing your own custom EDID via FixEDID.app. His method dealt with black screen glitch on Kabylake iGPUs in High Sierra. But as you may already guessed it helped me with my Haswell color depth glitch too.

All regards go to black.dragon74, I just describe his method here, 'cause it looks like there's no similar solution posted on tonymacx86 yet, but users really need it

Before you start, make sure that:

1. You have full QE/CI (all necessary kexts installed and correct ig-platform-id injected)

2. Backlight control implemented correctly as per Rehabman's guide https://www.tonymacx86.com/threads/...ontrol-using-applebacklightfixup-kext.218222/

3. Boot mode set to UEFI+CSM in BIOS

You will need:

1. DarwinDumper.app (attached to the post)

2. FixEDID.app (attached to the post)

3. get_edid.sh script by black.dragon74 (attached to the post)

4. Your favorite plist editor (Clover Configurator, Plist Editor Pro or similar)

Entire process is rather simple:

1. Open Darwin dumper and uncheck everything except EDID. Then click "Run" button from the left pane. It will dump your EDID and then open the output folder with the files.

2. In the output folder you will see 3 files: EDID.bin, EDID.hex, EDID.txt. We need the one in BIN format for FixEDID.app, so just copy it to Desktop for convenience.

3. Open FixEDID.app and click "Open EDID binary file" button. Choose the just copied EDID.bin file from your Desktop.

4. Below you will see a drop down menu saying "Apple iMac Display 16:10". Make a selection there according to your device (laptop or desktop) and it's screen size ratio. As I have a 16:9 laptop I chose "Apple MacBook Air Display 16:9".

5. Make sure that "Display class" below is set to "AppleBacklightDisplay" for we are overriding our internal LVDS display. (AppleDisplay is used for external displays like that on HDMI or DP)

6. Click on "Make" button. You won't see any sort of confirmation but the app has done it's work, creating a "DisplayVendorID-xxx" folder and 2 more files on your Desktop.

7. Close FixEDID and open the "DisplayVendorID-xxx" folder with a file named "DisplayProductID-xxxx" inside. For your convenience you can drag-and-drop it to the Desktop.

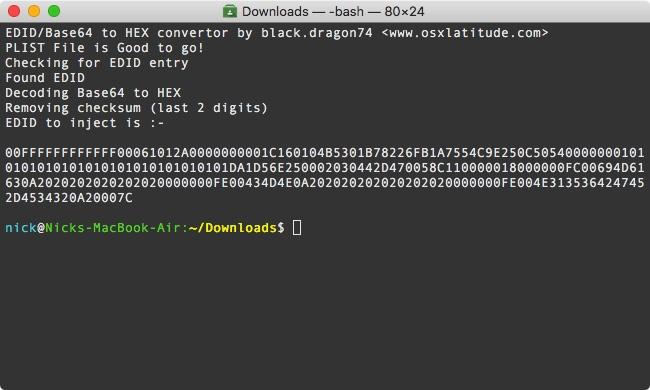

8. Now we need to extract EDID that is in base64 format and then convert it to HEX format as required by CLOVER. Assuming get_edid.sh is in Downloads folder, open terminal and type:

This script will give you EDID you need to inject in CLOVER, like:

9. Copy that EDID from Terminal window and paste it in your config.plist under Graphics > EDID > Custom. Don't forget to set "Inject" value to True (YES) under Graphics > EDID.

Note: In current official version of Clover (4700 at the moment of writing this article) injecting custom EDID won't work if you have already injected your IGPU values (e.g. device-id and ig-platform-id) in Devices > Properties section (for example when using Lilu+Whatevergreen bundle). You can use Rehabman's edition of Clover instead (available from his bitbucket site: Clover_v2.4k_r4701.RM-4961.695d25a4.zip), which is free of this bug, at least until it is officially fixed in the next versions of Clover.

10. Reboot and Voilà! You won't face that color depth issue ever again, but maybe will need to change your color profile in "Settings" to get rid of too acidic/blurry colors.

You can verify if the EDID is being injected by opening terminal and typing:

Note: Your system info probably will still say you have a 24-bit color display, but it seems normal to all MacBooks now. Visually it must be okay after this fix.

This method can also solve other EDID related display problems, if any, so feel free to use it and report here if it helped you.

IMPORTANT UPDATE

The described "Custom EDID method" seems to work only for those who have undefined Video input parameters bitmap in their original EDID. In that case byte 20 in your original EDID is set to "80", that corresponds to binary "1000000" which means undefined color depth and undefined video interface according to EDID spec.

Along with custom ProductID, VendorID and probably some other values of custom EDID (depending on one's system) the described method sets byte 20 to "95" which is 10010101 in binary, where Bit 7=1 (Digital Input), Bits 6–4 = 001 (6 bits per color channel), Bits 3–0 = 0101 (DisplayPort). And you will have something like this to fix the problem:

So if you experience the problem on Haswell IGPU check your original EDID first and then follow this guide if needed.

IF IT DIDN'T WORK

The discussion here and in the nearby thread showed that those who have some Kaby Lake IGPUs cannot fix the problem this way. If you are among them you will need Rehabman's fork of WhateverGreen to make Skylake spoofing as described by @Sniki:

-HELP- Weird ring like blur and images in Mojave

If you do it correct you will also enjoy your laptop display at it's best.

Good luck!

Recently I updated my perfectly working Sierra hackintosh laptop directly to Mojave, skipping High Sierra, and ran into a nasty color depth glitch. Since Yosemite I've been using ig-platform-id = 0x0a260006 for my Haswell Intel HD 4600 Mobile. But in Mojave, despite full QE/CI, I noticed that all gradients and shadows were garbled and not smooth, like I was playing an old 16-bit color video game. For me it was not just a "cosmetic" bug that others might not even notice or would call "not a big deal": I work with graphics, so seeing the full color palette is vital, and my eyes were getting tired fast. Background here:

https://www.tonymacx86.com/threads/...s-fixes-on-mojave.255823/page-14#post-1823669 (Post #139)

I tried different ig-platform-ids, tried migrating from FakePCIID* kexts to the Lilu+WhateverGreen bundle with injected properties instead of kext patches, tried injecting ProductID=0x9C7C in Clover, tried patching DSDT with a custom EDID generated with tosbaha's script, and almost got lost in the forest of hotpatching backlight control... But all of this was pointless, as the glitch was still there whatever I did.

I noticed that users with Haswell graphics had been experiencing the same problems since High Sierra:

https://www.tonymacx86.com/threads/intel-hd4400-display-issues-in-high-sierra.233119/

https://www.tonymacx86.com/threads/24-bit-screen-color-depth.243582/

https://www.tonymacx86.com/threads/intel-hd4000-only-24bit-color-depth.251813/

I was even ready to roll back to Sierra, as one of the guys in these threads did, but then I decided to do some more experiments with EDID, as I had a feeling that I still needed to dig in that direction. Then I came across a thread on another forum where user black.dragon74 described a method of preparing your own custom EDID via FixEDID.app. His method dealt with a black screen glitch on Kaby Lake iGPUs in High Sierra. But, as you may have already guessed, it helped me with my Haswell color depth glitch too.

All credit goes to black.dragon74. I am just describing his method here because it looks like no similar solution has been posted on tonymacx86 yet, and users really need it.

Before you start, make sure that:

1. You have full QE/CI (all necessary kexts installed and correct ig-platform-id injected)

2. Backlight control is implemented correctly as per Rehabman's guide https://www.tonymacx86.com/threads/...ontrol-using-applebacklightfixup-kext.218222/

3. Boot mode is set to UEFI+CSM in BIOS

You will need:

1. DarwinDumper.app (attached to the post)

2. FixEDID.app (attached to the post)

3. get_edid.sh script by black.dragon74 (attached to the post)

4. Your favorite plist editor (Clover Configurator, Plist Editor Pro or similar)

Entire process is rather simple:

1. Open Darwin dumper and uncheck everything except EDID. Then click "Run" button from the left pane. It will dump your EDID and then open the output folder with the files.

2. In the output folder you will see 3 files: EDID.bin, EDID.hex, EDID.txt. We need the one in BIN format for FixEDID.app, so just copy it to Desktop for convenience.

3. Open FixEDID.app and click "Open EDID binary file" button. Choose the just copied EDID.bin file from your Desktop.

4. Below you will see a drop down menu saying "Apple iMac Display 16:10". Make a selection there according to your device (laptop or desktop) and it's screen size ratio. As I have a 16:9 laptop I chose "Apple MacBook Air Display 16:9".

5. Make sure that "Display class" below is set to "AppleBacklightDisplay" for we are overriding our internal LVDS display. (AppleDisplay is used for external displays like that on HDMI or DP)

6. Click on "Make" button. You won't see any sort of confirmation but the app has done it's work, creating a "DisplayVendorID-xxx" folder and 2 more files on your Desktop.

7. Close FixEDID and open the "DisplayVendorID-xxx" folder with a file named "DisplayProductID-xxxx" inside. For your convenience you can drag-and-drop it to the Desktop.

8. Now we need to extract EDID that is in base64 format and then convert it to HEX format as required by CLOVER. Assuming get_edid.sh is in Downloads folder, open terminal and type:

Code:

# Change working directory
cd ~/Downloads
# Make script executable
chmod a+x get_edid.sh
# Run and get EDID to inject
./get_edid.sh ~/Desktop/DisplayProductID*

The script prints the EDID, as a hex string, that you need to inject in Clover.

9. Copy that EDID from the Terminal window and paste it into your config.plist under Graphics > EDID > Custom. Don't forget to set the "Inject" value to True (YES) under Graphics > EDID.

Note: In the current official version of Clover (4700 at the time of writing), injecting a custom EDID won't work if you have already injected your IGPU values (e.g. device-id and ig-platform-id) in the Devices > Properties section (for example when using the Lilu+WhateverGreen bundle). You can use Rehabman's edition of Clover instead (available from his bitbucket site: Clover_v2.4k_r4701.RM-4961.695d25a4.zip), which is free of this bug, at least until it is officially fixed in a later version of Clover.

10. Reboot and voilà! You shouldn't face that color depth issue again, but you may need to change your color profile in "Settings" to get rid of overly acidic/blurry colors.
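For anyone curious what the conversion in step 8 boils down to, here is a minimal sketch of the base64-to-hex part. This is not the actual get_edid.sh (its contents are not reproduced here); the function name `b64_to_hex` is my own invention, and the sketch assumes you have already copied the base64 string out of the DisplayProductID file by hand.

```shell
#!/bin/sh
# Hypothetical helper (NOT part of get_edid.sh): convert a base64 string
# into one continuous lowercase hex string.
b64_to_hex() {
    # base64 --decode turns the text back into raw bytes,
    # od prints every byte as two hex digits,
    # tr strips the spaces and newlines od adds between them.
    printf '%s' "$1" | base64 --decode | od -An -v -tx1 | tr -d ' \n'
}

# Example input: the fixed 8-byte EDID header, base64-encoded.
b64_to_hex 'AP///////wA='
echo
```

With a real override file you would pass the full base64 string instead of the 8-byte header used here.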

You can verify that the EDID is being injected by opening Terminal and typing:

Code:

ioreg -l | grep "IODisplayEDID"

Note: Your system info will probably still say you have a 24-bit color display, but that seems to be normal for all MacBooks now. Visually everything should be okay after this fix.
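If you want to compare the injected EDID with what you pasted into config.plist, it helps to strip everything but the hex digits from the ioreg line. A rough sketch follows; the helper name `extract_edid_hex` is made up, and it assumes ioreg prints the property as `"IODisplayEDID" = <hex...>`, so check that against your own output first.

```shell
#!/bin/sh
# Hypothetical helper: read ioreg output on stdin and print only the hex
# payload of the IODisplayEDID property.
# Assumed line format:   "IODisplayEDID" = <00ffffffffffff00...>
extract_edid_hex() {
    sed -n 's/.*"IODisplayEDID" = <\([0-9a-fA-F]*\)>.*/\1/p'
}

# Stand-in for a real ioreg line, shortened to the 8-byte EDID header.
sample='    | |   "IODisplayEDID" = <00ffffffffffff00>'
printf '%s\n' "$sample" | extract_edid_hex
```

On a real system you would run `ioreg -l | extract_edid_hex` and compare the result with the string from step 9.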

This method can also solve other EDID-related display problems, if any, so feel free to use it and report here if it helped you.

IMPORTANT UPDATE

The described "Custom EDID method" seems to work only for those whose original EDID has an undefined video input parameters bitmap. In that case byte 20 of your original EDID is set to "80", which corresponds to binary "10000000" and means digital input with undefined color depth and undefined video interface according to the EDID spec. For example:

header: 00 ff ff ff ff ff ff 00

serial number: 0d af 20 17 00 00 00 00 02 15

version: 01 03

basic params: 80 26 15 78 0a

chroma info: d8 95 a3 55 4d 9d 27 0f 50 54

established: 00 00 00

standard: 01 01 01 01 01 01 01 01 01 01 01 01 01 01 01 01

descriptor 1: 2e 36 80 a0 70 38 1f 40 30 20 35 00 7e d7 10 00 00 18

descriptor 2: 00 00 00 fe 00 4e 31 37 33 48 47 45 2d 4c 31 31 0a 20

descriptor 3: 00 00 00 fe 00 43 4d 4f 0a 20 20 20 20 20 20 20 20 20

descriptor 4: 00 00 00 fe 00 4e 31 37 33 48 47 45 2d 4c 31 31 0a 20

extensions: 00

checksum: 6e

Along with a custom ProductID, VendorID and probably some other values (depending on one's system), the described method sets byte 20 to "95", which is 10010101 in binary: bit 7 = 1 (digital input), bits 6–4 = 001 (6 bits per color channel), bits 3–0 = 0101 (DisplayPort). You will end up with something like this, which fixes the problem:

header: 00 ff ff ff ff ff ff 00

serial number: 06 10 f2 9c 00 00 00 00 1a 15

version: 01 04

basic params: 95 1a 0e 78 02

chroma info: ef 05 97 57 54 92 27 22 50 54

established: 00 00 00

standard: 01 01 01 01 01 01 01 01 01 01 01 01 01 01 01 01

descriptor 1: 2e 36 80 a0 70 38 1f 40 30 20 35 00 7e d7 10 00 00 18

descriptor 2: 00 00 00 fc 00 43 6f 6c 6f 72 20 4c 43 44 0a 20 20 20

descriptor 3: 00 00 00 fe 00 43 4d 4f 0a 20 20 20 20 20 20 20 20 20

descriptor 4: 00 00 00 fe 00 4e 31 37 33 48 47 45 2d 4c 31 31 0a 20

extensions: 00

checksum: 86
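To make the byte 20 arithmetic concrete, here is a small sketch that splits the byte into the three bit fields described in this update. The function name `decode_byte20` is hypothetical, and it only names the field values mentioned in this post; anything else is printed as a raw code rather than guessed.

```shell
#!/bin/sh
# Decode EDID byte 20 (video input parameters) using the bit layout quoted
# in the post. Argument: the byte as two hex digits, e.g. 80 or 95.
decode_byte20() {
    b=$(( 0x$1 ))
    # Bit 7: 1 = digital input, 0 = analog input.
    if [ $(( b >> 7 )) -eq 0 ]; then
        echo "analog input"
        return
    fi
    depth=$(( (b >> 4) & 7 ))   # bits 6-4: color depth code
    iface=$(( b & 15 ))         # bits 3-0: video interface code
    case $depth in
        0) d="undefined color depth" ;;
        1) d="6 bits per channel" ;;
        *) d="depth code $depth" ;;
    esac
    case $iface in
        0) i="undefined interface" ;;
        5) i="DisplayPort" ;;
        *) i="interface code $iface" ;;
    esac
    echo "digital, $d, $i"
}

decode_byte20 80   # value from the original EDID
decode_byte20 95   # value written by the custom EDID method
```

The first call reports an undefined depth and interface, the second reports 6 bits per channel over DisplayPort, matching the two dumps.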

So if you experience the problem on a Haswell IGPU, check your original EDID first and then follow this guide if needed.
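A quick way to check byte 20 without reading the whole dump is to pull that single byte out of the EDID.bin file from DarwinDumper. The sketch below assumes "byte 20" means zero-based offset 20, which is where the "basic params" line starts in a standard dump; `edid_byte20` is a made-up helper name, and the demo runs on a throwaway file rather than a real EDID.

```shell
#!/bin/sh
# Hypothetical helper: print the byte at zero-based offset 20 of a binary
# EDID file as two hex digits. od -j skips bytes, -N limits how many are read.
edid_byte20() {
    od -An -v -tx1 -j20 -N1 "$1" | tr -d ' \n'
}

# Demo on a temporary file: 20 zero bytes followed by 0x80 (octal 200).
tmp=$(mktemp)
printf '\0\0\0\0\0\0\0\0\0\0\0\0\0\0\0\0\0\0\0\0\200' > "$tmp"
edid_byte20 "$tmp"
echo
rm -f "$tmp"
```

On your own machine you would call it as `edid_byte20 ~/Desktop/EDID.bin`. If it prints 80, the custom EDID method is worth trying.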

IF IT DIDN'T WORK

The discussion here and in a nearby thread showed that those with some Kaby Lake IGPUs cannot fix the problem this way. If you are among them, you will need Rehabman's fork of WhateverGreen to spoof Skylake, as described by @Sniki:

-HELP- Weird ring like blur and images in Mojave

If you do it correctly, you will also enjoy your laptop display at its best.

Good luck!
