- Joined

- Sep 7, 2012

- Messages

- 9

- Motherboard

- Gigabyte H81

- CPU

- i3-4170

- Graphics

- GTX 950

- Mac

- Mobile Phone

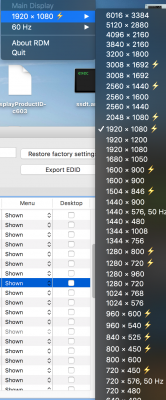

I know I'm becoming a pain in the ass, but either I don't understand this 100% or my TV is crazy and can't accept HiDPI resolutions above 1080p. Does adding the native resolution of 3840x2160 mean adding it as a normal resolution without HiDPI, or as a HiDPI one? Doesn't the native resolution come from the EDID? I ask because if I select "best resolution for this monitor" in the settings, it defaults to 1080p.

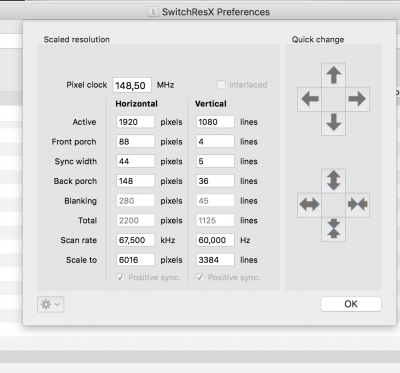

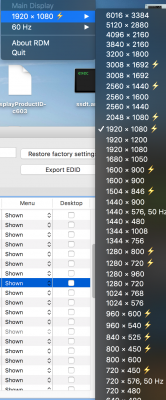

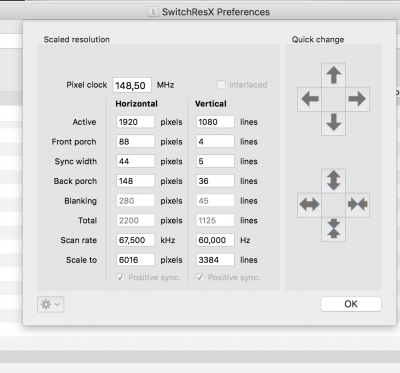

Basically, I added 3008x1692 (HiDPI) and 6016x3384 (HiDPI) to the plist. As you can see in the picture, both show up in RDM and SwitchResX, but 3008 is blurry and looks shitty; it's clearly not a scaled-down 3840x2160. I don't know whether the SwitchResX report is accurate, but if it is, it more or less confirms my theory.
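For reference, here is a rough sketch of the arithmetic behind those two entries, as far as I understand it. It assumes the commonly circulated convention for display override plists: each `scale-resolutions` entry is base64 data holding a big-endian width and height, and HiDPI entries carry two extra flag words (`00000001 00200000`). The helper names are made up for illustration, and the flag values are an assumption, not something confirmed by Apple documentation.

```python
import base64
import struct

# Assumption: flag words commonly appended to HiDPI entries in override plists.
HIDPI_FLAGS = (0x00000001, 0x00200000)


def backing_size(width, height, scale=2):
    """Framebuffer size a HiDPI mode of width x height renders into (2x by default)."""
    return width * scale, height * scale


def encode_scale_resolution(width, height, hidpi=True):
    """Base64 payload for one scale-resolutions entry (hypothetical helper)."""
    payload = struct.pack(">II", width, height)  # big-endian 32-bit width, height
    if hidpi:
        payload += struct.pack(">II", *HIDPI_FLAGS)
    return base64.b64encode(payload).decode("ascii")


def downscale_factor(backing_width, panel_width):
    """How much the backing framebuffer must shrink to fit the panel."""
    return backing_width / panel_width


if __name__ == "__main__":
    looks_like = (3008, 1692)          # the "looks like" HiDPI mode
    panel = (3840, 2160)               # the TV's native resolution
    backing = backing_size(*looks_like)
    print("backing framebuffer:", backing)
    print("entry for backing size:", encode_scale_resolution(*backing))
    print("downscale to panel: %.3fx" % downscale_factor(backing[0], panel[0]))
```

If the mode really worked as intended, the 6016x3384 framebuffer would be scaled down to the 3840x2160 panel. If the TV is only being driven at 1080p, that same framebuffer gets squeezed much further, which would fit the blurriness I'm seeing.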
