All SSDs have error checking; some do more than others. Apple's seem to do the most with their own custom controllers, and now we know why, I guess.

When High Sierra is released I will be on an APFS SSD for the root volume plus striped ZFS HDDs for data. A root filesystem backup to an HFS+ hard drive will be the only redundancy, for now.

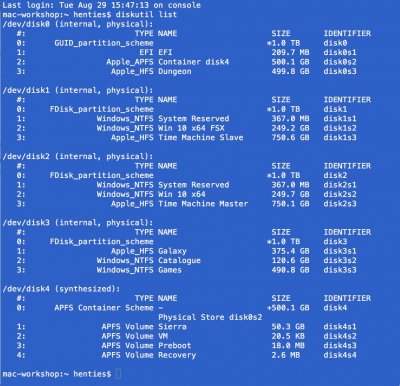

Thanks for enlightening me. In the interim I have also reviewed my original hesitancy regarding the future use of APFS, since at present there is no data on any of my boot SSD volumes. My own Resilio Sync based cloud is a SATA 3 HDD, mounted on a folder called Galaxy in my home directory on every machine. That takes care of my mail and all of my downloads, as well as a few other things that change often, such as my Lightroom catalogue. All other data, terabytes of it, which I have accumulated over the years and do not dare to lose, is stored on 2 headless Ubuntu servers and accessible via NFS. On each server I run a RAID 5 array comprising 4 x 2 terabyte SATA 3 HDDs, giving me 6 terabytes of effective storage per server, 12 terabytes in total. All this is in turn backed up to HDDs which are stored off site. I consider off-site storage essential as a safeguard against theft and other catastrophes that may decide to visit me one day.

All data is accessible on demand, with the headless servers automatically falling asleep 5 minutes after the last macOS machine on my network has gone to sleep. That is one of the reasons why a well-functioning sleep is so important to me. An Apple workstation waking up, whether because of Power Nap or because I force it awake, will not wake my headless NAS servers. NAS wakeup is also on demand, either by trying to access some data on a server or by me force-waking the respective machine when needed. All that functionality is managed by a "control panel", one for each server, which I have written for my specific needs and environment.
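For anyone wanting to check the array arithmetic mentioned above, here is a minimal sketch. It assumes the usual RAID 5 rule that one disk's worth of capacity goes to parity; the function name `raid5_usable_tb` is made up for illustration and is not part of any tool.

```python
def raid5_usable_tb(disks: int, disk_tb: float) -> float:
    """Usable capacity of a RAID 5 array, assuming equal-sized disks.

    RAID 5 spreads parity across all members, costing one disk's
    worth of space in total.
    """
    if disks < 3:
        raise ValueError("RAID 5 needs at least 3 disks")
    return (disks - 1) * disk_tb


# Figures from the post: 4 x 2 TB per server, 2 servers.
per_server = raid5_usable_tb(disks=4, disk_tb=2.0)
total = 2 * per_server
print(per_server, total)  # 6.0 12.0
```

This ignores filesystem overhead and the TB versus TiB difference, so the space actually reported by the servers will be somewhat lower.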

Considering all the foregoing, I should not actually care too much about SSD data integrity at all, since recovery is such a breeze, provided more than one properly working iHack is available on the network. NFS data activity is very fast on a gigabit network, at least fast enough for my needs. During NFS data transfers, read and write operations are split between 2 "disk" controllers and 2 NICs, one of each on each machine, which I believe contributes to the excellent throughput. I force NFS to use TCP as the underlying protocol, which also gives me data integrity during transfers: as a connection-oriented protocol, TCP checksums every segment and retransmits anything lost or damaged, and that comes in addition to Ethernet's CRC checking. Hoping I have not bored you too much with the foregoing.
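To illustrate the two layers of checking mentioned above, here is a rough sketch. It assumes the 16-bit one's-complement sum described in RFC 1071 (the style of checksum TCP uses) and uses `zlib.crc32`, which is built on the same CRC-32 polynomial as the Ethernet frame check. The real TCP checksum also covers the TCP header and a pseudo-header, so this is a simplification, and the payload bytes are invented for the example.

```python
import zlib


def internet_checksum(data: bytes) -> int:
    """16-bit one's-complement checksum in the style of RFC 1071."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]  # big-endian 16-bit words
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold carries back in
    return ~total & 0xFFFF


# Hypothetical payload, with a single bit flipped in the last byte.
payload = b"lightroom catalogue block"
corrupted = b"lightroom catalogue blocj"

# Both checks notice the flipped bit.
print(internet_checksum(payload) != internet_checksum(corrupted))
print(zlib.crc32(payload) != zlib.crc32(corrupted))
```

The 16-bit sum is the weaker of the two, which is one reason having both layers (plus whatever the filesystem does) is reassuring.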

Greets